Fully Scalable ETL Solution

Sesame Software helps you combine structured and unstructured data from many sources and load it into the destination of your choice.

Extract

Easily extract data from its original source, whether that is a database or an application.

Transform

Transform data by cleaning it up, deduplicating it, combining it, and preparing it to load.

Load

Seamlessly load the data into the target database of your choice in a matter of minutes.
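The three stages above can be sketched in a few lines of Python. This is only an illustration of the general extract, transform, load pattern, not Sesame Software's implementation: the `contacts_raw` and `contacts` tables, the column names, and the function names are all hypothetical, and SQLite stands in for whichever source and target you actually use.

```python
import sqlite3

def extract(source_conn):
    """Extract: read raw rows from a hypothetical `contacts_raw` source table."""
    return source_conn.execute(
        "SELECT name, email FROM contacts_raw ORDER BY rowid"
    ).fetchall()

def transform(rows):
    """Transform: trim whitespace, lowercase emails, drop blank or duplicate emails."""
    seen = set()
    cleaned = []
    for name, email in rows:
        email = (email or "").strip().lower()
        if not email or email in seen:
            continue  # skip rows with no email or an email already kept
        seen.add(email)
        cleaned.append(((name or "").strip(), email))
    return cleaned

def load(target_conn, rows):
    """Load: write the cleaned rows into a hypothetical `contacts` target table."""
    target_conn.execute(
        "CREATE TABLE IF NOT EXISTS contacts (name TEXT, email TEXT PRIMARY KEY)"
    )
    target_conn.executemany(
        "INSERT OR REPLACE INTO contacts (name, email) VALUES (?, ?)", rows
    )
    target_conn.commit()

def run_etl(source_conn, target_conn):
    """Run the three stages in order and return the number of rows loaded."""
    rows = transform(extract(source_conn))
    load(target_conn, rows)
    return len(rows)
```

Keeping each stage in its own function means the cleaning rules can be changed or tested without touching the source or target connections.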

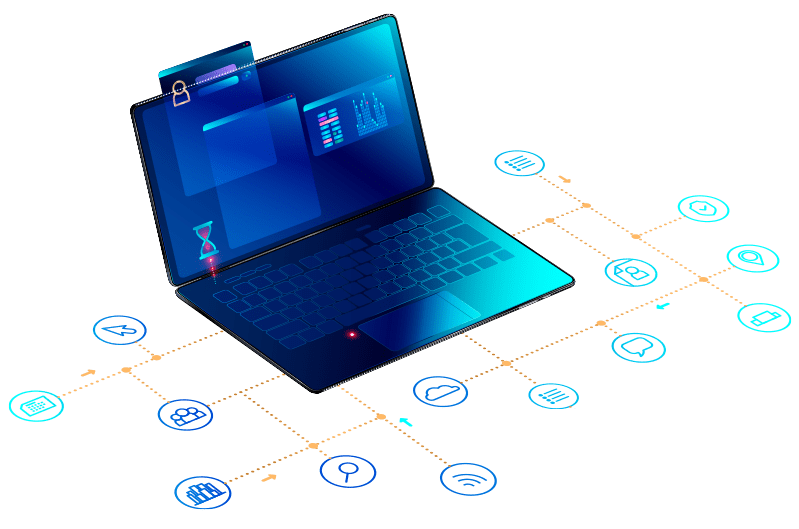

Effortless Integration & Enhanced Analytics

Our ETL solution acts as the bridge, seamlessly extracting data from multiple sources, transforming it into a usable format, and loading it into your target systems for streamlined analysis.

Break Down Data Silos

Eliminate data fragmentation and unify your information in a single, accessible location. Choose your destination, such as Oracle ADW or Microsoft Azure, and gain seamless control over your valuable data assets.

Faster Insights, Faster Decisions

Our solution transforms your data into optimized table structures, ready for immediate analysis with your preferred BI tool. Unlock hidden insights and gain a complete view of critical business data in record time.

Always Fresh, Always Informed

Our automated, incremental data synchronization ensures your data is constantly refreshed at the frequency you define. Make informed decisions based on the most up-to-date information available.
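One common way to implement incremental synchronization is a "high-water mark": each run copies only the rows changed since the previous run. The sketch below shows that general technique, not Sesame Software's actual mechanism. The `accounts` table, its `updated_at` column (assumed to hold ISO 8601 timestamps, which sort correctly as text), and the `sync_incremental` function are hypothetical.

```python
import sqlite3

def sync_incremental(source_conn, target_conn, last_synced):
    """Copy rows changed after `last_synced` and return the new watermark."""
    # Select only rows modified since the previous run.
    rows = source_conn.execute(
        "SELECT id, name, updated_at FROM accounts "
        "WHERE updated_at > ? ORDER BY updated_at",
        (last_synced,),
    ).fetchall()

    target_conn.execute(
        "CREATE TABLE IF NOT EXISTS accounts "
        "(id INTEGER PRIMARY KEY, name TEXT, updated_at TEXT)"
    )
    # Upsert: insert new rows and overwrite rows that already exist.
    target_conn.executemany(
        "INSERT OR REPLACE INTO accounts (id, name, updated_at) VALUES (?, ?, ?)",
        rows,
    )
    target_conn.commit()

    # The latest timestamp seen becomes the starting point for the next run.
    return rows[-1][2] if rows else last_synced
```

A scheduler would call this at the chosen frequency and store the returned watermark between runs. Note that the strict `>` comparison can miss a row written later with the same timestamp as the watermark, so a production design would need to handle ties.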

The Power of ETL

Say goodbye to dirty data. Our ETL solution ensures your data is clean, consistent, and ready for reliable analysis.

Stop waiting for data preparation. Automated ETL processes accelerate the delivery of valuable business insights.

Eliminate the need for manual data manipulation, saving your team time and resources.

As your data volume grows, our ETL solution scales effortlessly to handle increasing data demands.

Why Choose Sesame Software ETL?

Sesame Software ETL can automate your data transformation journey and empower you to make data-driven decisions with confidence.

Seamless Data Loading

Load your transformed data into your data warehouse, data lake, or other analytics platforms for immediate insights generation.

Pre-Built Connectors

Connect to a wide range of popular data sources with our extensive library of pre-built connectors, saving you valuable development time.

Enhanced Data Governance

Maintain control and ensure data quality with robust data governance features.

Robust Scheduling & Monitoring

Automate your ETL workflows and monitor their performance with comprehensive scheduling and monitoring tools.

Trusted by the Best in the Business

“Once you have Relational Junction set up, it just works without having to do much more than the equivalent of regular oil changes.”

– Buzztime

“The process has been very easy to understand and we feel confident that the low maintenance of this service will save us time, money, and resources.”

– Clickstop

ETL Resources

Learn more about how ETL can help your organization grow.

How to Prevent Deadlocks and Data Failures During Large-Scale Transfers

At Sesame Software, preventing deadlocks and data failures isn’t just theory – it’s a practice we’ve refined for over three decades. Our platform was built specifically to tackle the complex challenges of large-scale data transfers, ensuring your...

Migrating from Pardot? Here’s How to Keep Your Data Intact

Switching from Pardot to a new marketing platform can feel overwhelming – especially when it comes to your data. Pardot (now known as Marketing Cloud Account Engagement) is designed for B2B marketing, but as your business evolves, you may find that...

How to Back Up Salesforce Data Using Sesame Software

Backing up your Salesforce data isn’t just a precaution—it’s a core part of any resilient data strategy. Whether you're preparing for accidental deletions, data corruption, or compliance audits, having a reliable backup solution can save you time,...

Stop operating in silos.

Are you ready to unlock the full potential of your data and achieve consistency across your organization?

Meet with us today to get your data moving in minutes and transform your data management strategy.